To understand this rigorous proof that there is no one-to-one correspondence between integers and points on a line, suppose we encounter a ChartMaker (CM) who wants to try to create this one-to-one correspondence. He presents a chart that looks like this:

| Integer | Point |
|---|---|
| 1 | 0.0012… |
| 2 | 0.0314… |
| 3 | 0.1347… |
| 4 | 0.2256… |

And so forth, again, for as long as patience holds out. The decimal numbers on the right don’t have to go steadily upward toward 1.0, the end point. They can circle back, if CM so desires, to pick up points neglected the first time around. And the chart by hypothesis never ends. So how do we know it must be incomplete?

Presumably CM has in mind a general rule, and believes that his general rule ensures that every possible number will show up sooner or later on the right-hand side of the chart. We don’t need to worry about what his general rule might be in order to establish that it will fail. We can simply create a decimal number that isn’t on his chart and that won’t be on his chart.

And that’s easy, Cantor said. We work across his list diagonally. We write a decimal number whose first digit is not 0 (not the same as the first digit of the first point listed above). Our second digit is not 3 (not the same as the second digit of the second number on the list). Our third digit is not 4, our fourth is not 6, and so forth. Such a number, for the list with which we started, might begin 0.5227…
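For readers who like to see the construction run, here is a minimal Python sketch of the diagonal step applied to the four rows of CM’s chart. It assumes each point is given as a string of decimal digits, and it uses one common choice of replacement digit (5, or 4 if the diagonal digit is already 5) rather than the digits chosen above; steering clear of 0 and 9 sidesteps numbers with two decimal spellings, such as 0.4999… = 0.5. The function names are illustrative only.

```python
def diagonal_digit(d: int) -> int:
    # Pick 5 unless the diagonal digit is already 5, in which case pick 4.
    # Never producing 0 or 9 avoids dual expansions like 0.4999... = 0.5.
    return 4 if d == 5 else 5


def diagonalize(chart: list[str]) -> str:
    """Build a decimal that differs from row k of the chart at digit k."""
    digits = []
    for k, point in enumerate(chart):
        fraction = point.split(".")[1]  # the digits after the decimal point
        digits.append(str(diagonal_digit(int(fraction[k]))))
    return "0." + "".join(digits)


# The four rows of CM's chart from the table above.
chart = ["0.0012", "0.0314", "0.1347", "0.2256"]
print(diagonalize(chart))  # 0.5555
```

The result, 0.5555…, disagrees with row 1 in its first digit, row 2 in its second, and so on, just as 0.5227… does.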

Now, how can we be sure 0.5227… won’t show up later on CM’s chart? Because of the principle of construction stated above.

After all, suppose CM says, “Aha, your 0.5227… was going to be number 432 on my list!”

No, it wasn’t.

Because the point and decimal number that correspond to integer 432 on his list would have had some specific digit in its 432nd position (say, 9), and according to our principle of construction, our hypothetical number would have had something other than 9 there.
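CM’s “general rule” can be treated as a function from the integer n to the digits of the nth point. The sketch below assumes a purely hypothetical rule (row n is the number 1/(n + 1), which of course misses most points) and shows that, whatever row CM names, our number differs from that row at the matching digit. Python’s exact integer arithmetic lets us read off the 432nd digit without any rounding.

```python
def cm_digit(n: int, k: int) -> int:
    """Hypothetical CM rule: row n is 1/(n + 1). Return its k-th decimal digit (k >= 1)."""
    return (10 ** k // (n + 1)) % 10


def our_digit(n: int) -> int:
    """n-th digit of our diagonal number: chosen to differ from row n at position n."""
    return 4 if cm_digit(n, n) == 5 else 5


def refute(claimed_row: int) -> tuple[int, int]:
    """Return (CM's digit, our digit) at the position that exposes CM's claim."""
    return cm_digit(claimed_row, claimed_row), our_digit(claimed_row)


theirs, ours = refute(432)
print(theirs, ours)  # the two digits always differ
```

Swapping in any other rule for `cm_digit` changes the digits but not the outcome, which is the point of the argument.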

It will always

be possible to foil the pretensions to completeness of CM in this manner. Thus,

it will never be possible for our chart maker to establish a one-to-one

relationship. Thus, again, there is no equivalence between these two sets,

which is what was to be proven. There are at least two distinct orders of

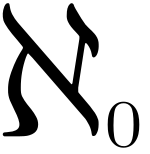

infinity. Cantor called the first order of infinity, that of all integers,

aleph zero, portrayed graphically to the left of this text.

The order of all points on a line is a higher infinite number. Cantor conjectured that it is aleph one, the next infinity after aleph zero (the “continuum hypothesis”). And you can continue: there is an infinity of infinities.
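The “infinity of infinities” rests on Cantor’s theorem: every set has strictly more subsets than members, so taking the set of all subsets always yields a larger infinity. The same diagonal trick proves it. The sketch below only checks small finite sets by brute force, so it is an illustration rather than a proof of the infinite case: for every possible map f from a set to its subsets, the “diagonal” subset of elements that are not members of their own image is never hit. The function names are illustrative.

```python
from itertools import chain, combinations, product


def power_set(s):
    """All subsets of s, as frozensets."""
    items = list(s)
    return [
        frozenset(c)
        for c in chain.from_iterable(combinations(items, r) for r in range(len(items) + 1))
    ]


def diagonal_set(s, f):
    """Cantor's diagonal subset: the elements x of s that are not members of f(x)."""
    return frozenset(x for x in s if x not in f[x])


def check_cantor(s) -> bool:
    """True if, for every map f from s to its subsets, the diagonal subset is missed."""
    elements = sorted(s)
    subsets = power_set(s)
    for images in product(subsets, repeat=len(elements)):
        f = dict(zip(elements, images))
        if diagonal_set(s, f) in f.values():
            return False
    return True


print(check_cantor({1, 2, 3}))  # True: all 512 maps miss their diagonal subset
```

If the diagonal subset D were f(y) for some y, then y would belong to D exactly when it does not, the same contradiction CM runs into at row 432.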

This is all a bit mind-blowing, because this notion of a plurality of infinities (which came to be called “transfinite” numbers, though the distinction between infinite and transfinite need not concern us here) is at odds with the intuitive (“common sense”) notion of infinity. Ordinary folk think of “infinity” as a singular term, and one which, since by hypothesis it swallows up everything actual and possible in one huge sum beyond sums, cannot be replicated, much less exceeded!

You might say that Cantor, in trying to reduce numbers to sets, ended up adding new and very strange numbers to the bestiary. It may be reassuring to consider that the transfinites are by definition sets, too, so order of a sort is retained.

Yet there’s a twist to the story of numbers-as-sets, and it allows us a neat ending to our discussion, several posts long, of the bestiary of numbers. For it is possible that the very smart people who worked on set theory in the 19th century were in an important sense misguided. They may have been mistaken at the very start, in the idea that our understanding of numbers gains when we start seeing numbers, and talking about numbers, as the names of sets. Does this hurt or help? Does it clarify or obscure?
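To make “numbers as the names of sets” concrete, here is one standard encoding, a later (20th-century) one due to John von Neumann and not necessarily what the 19th-century workers had in mind: 0 is the empty set, and each next number is the previous set with the previous set itself added as a member. In this sketch Python’s frozenset stands in for a mathematical set.

```python
def von_neumann(n: int) -> frozenset:
    """Encode n as a set: 0 = {} and n + 1 = n ∪ {n}."""
    s = frozenset()
    for _ in range(n):
        s = s | frozenset([s])  # add the current set to itself as a new member
    return s


three = von_neumann(3)
print(len(three))              # 3: the set encoding n has exactly n members
print(von_neumann(2) in three)  # True: every smaller number is a member
```

Whether this picture clarifies or obscures what we mean by “three” is exactly the question raised above.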

The idea came under fire early in the 20th century, when Bertrand Russell observed that there is nothing in set theory that prevents self-reference (the definition of a set, which we can simply call X, that would have X as a member). Further, once we introduce self-reference, set theory turns out to be quickly productive of paradox.

Consider the set of all sets that don’t include themselves: does it include itself, or not? It must if it doesn’t, yet it can’t if it does.
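One way to feel the pull of the paradox is to model a set, as a rough assumption, by its membership test: a function that answers whether something belongs to it. The “set of all sets that don’t include themselves” then becomes a test that asks whether its argument fails to include itself. Asked about itself, the test never settles; in Python the question recurses until the interpreter gives up with a RecursionError. The function names are illustrative.

```python
def russell(s) -> bool:
    """Membership test for 'the set of all sets that don't include themselves'.

    A "set" is modeled as a membership-test function, so s(s) asks whether s includes itself.
    """
    return not s(s)


def ask_about_itself() -> str:
    try:
        russell(russell)  # does the Russell set include itself?
    except RecursionError:
        return "no stable answer"
    return "answered"


empty = lambda x: False  # a "set" with no members, so it does not include itself
print(russell(empty))        # True: the empty set belongs to the Russell set
print(ask_about_itself())    # no stable answer
```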

The whole idea of explaining numbers as sets reminds some people of the old story about a turtle supporting the earth, and another turtle supporting that turtle, and so forth. The invariable punchline: “It’s turtles all the way down.”

If you accept the idea that there can be a bottom turtle in the life of the mind, an idea that does not need to be defined in terms of other ideas beneath it (a “primitive” idea, as some philosophers call it), then number is a good candidate. Philosopher-historian William Barrett made this point with great clarity and erudition in his 1979 book, The Illusion of Technique. Yet of course if you treat number as a bottom-turtle kind of idea, then you won’t try to stick the idea of sets beneath it.

We learn the “counting numbers” as youngsters, from the example of older people combined with our instinct for imitation. We don’t learn them with the benefit of anything more fundamental, and we have no need to fill in such a foundation later in life.

These counting numbers become the template for the broader idea of number. As we’ve seen in this chapter, that idea can grow, and historically has grown, much broader, but it always grows by extension from, analogy to, or family resemblance with this primitive template. We don’t need set theory for any of it.
